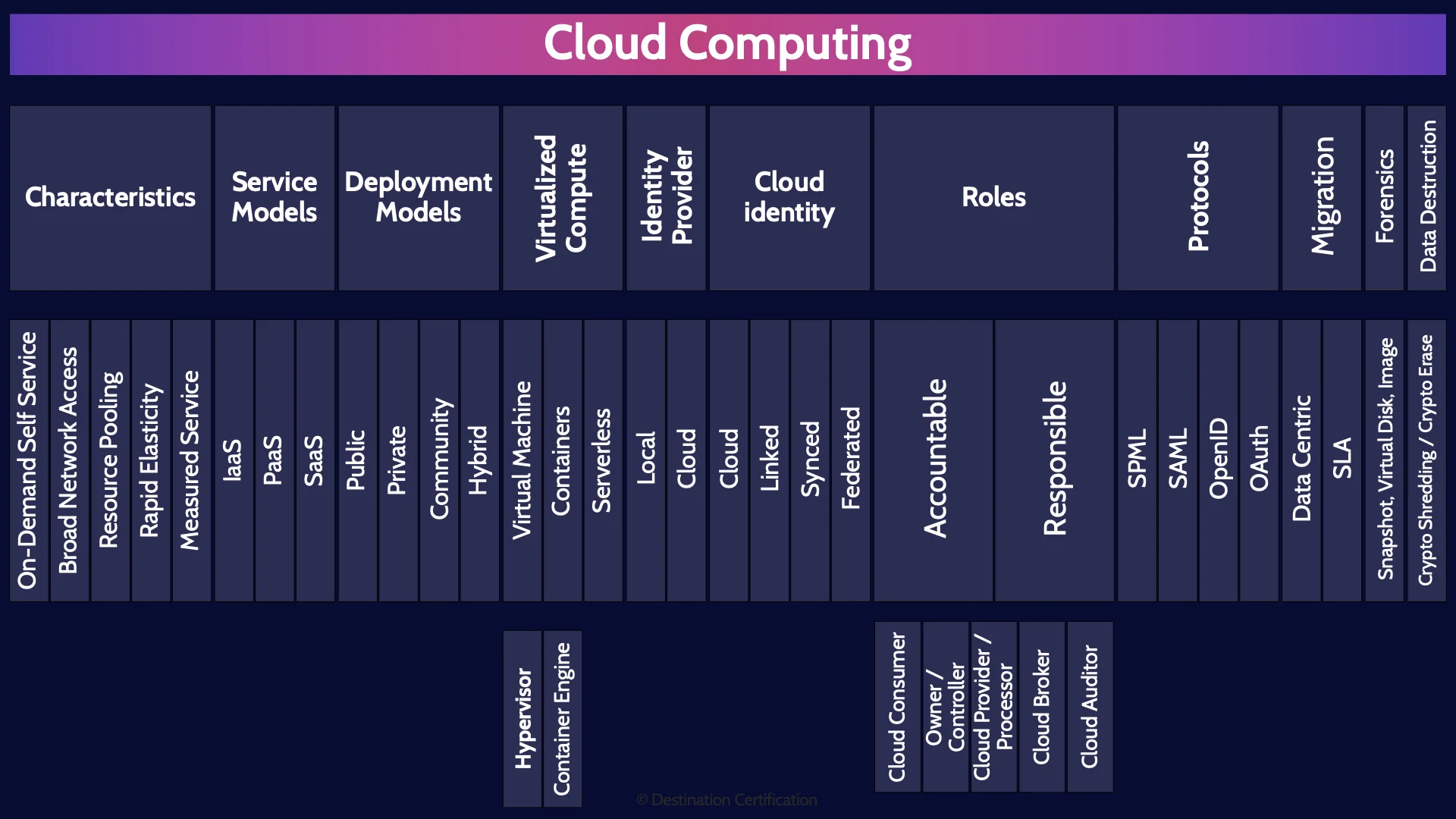

CISSP Domain 3 - Cloud Computing MindMap

Transcript

Introduction

Hey, I’m Rob Witcher from Destination Certification, and I’m here to help YOU pass the CISSP exam. We are going to go through a review of the major topics related to Cloud Computing in Domain 3, to understand how they interrelate, and to guide your studies.

This is the fifth of 9 videos for domain 3. I have included links to the other MindMap videos in the description below. These MindMaps are one part of our complete CISSP MasterClass.

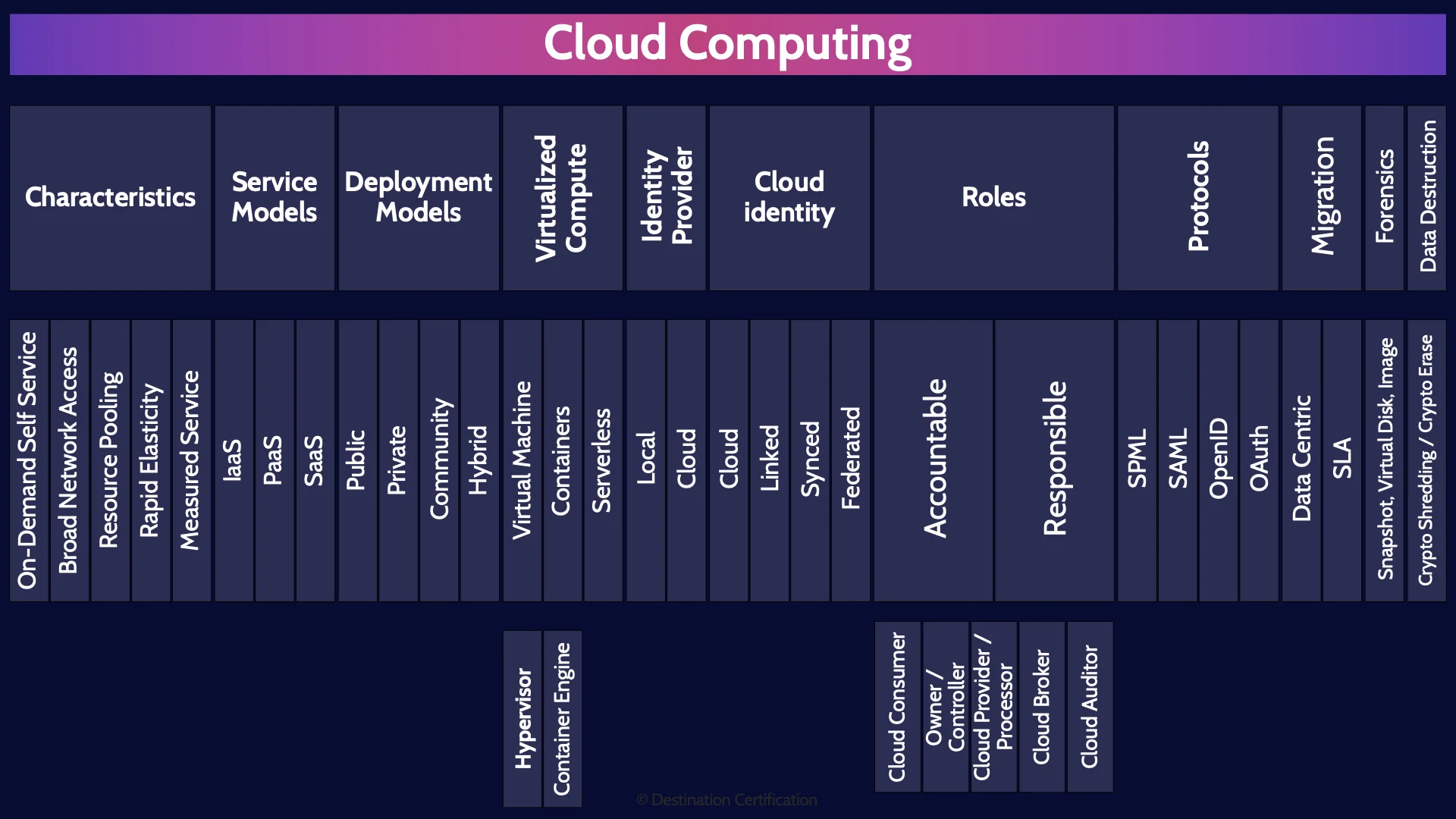

Cloud Computing

Cloud Computing. Everybody and their dog is moving their infrastructure, applications, and data to the cloud and innovating with emerging technologies like AI, blockchain and IoT. Since March 2006, when the first cloud service appeared (Amazon’s Simple Storage Service, good old S3 buckets), cloud has quickly become a massive part of most organizations’ IT infrastructure, if not the dominant part.

You would therefore expect that the CISSP exam would have a large percentage of questions dedicated to cloud, but that is not the case. You will certainly see questions related to cloud security on the exam, but there are relatively few of them, despite cloud’s importance to organizations, because ISC2 created a separate certification focused on cloud security: the CCSP, Certified Cloud Security Professional.

So, let’s go through some of the basics of cloud security you need to know for the CISSP exam.

Characteristics

We’ll begin by defining what cloud computing is. It’s actually remarkably difficult to come up with a nice simple definition, because the cloud is many different things to many different people. It might be some servers you’re running in your own datacenter that only you have access to, it might be someone else’s application that you’re just renting access to, it might be running a container somewhere, or a Federated Identity Management solution as a service. So how do we define cloud computing? A good method the industry has come up with is to define a set of characteristics. If a service complies with these characteristics, then it is probably cloud.

On-Demand Self Service

The first characteristic is On-Demand Self Service, which means users can request services and sophisticated software at the cloud service provider automatically provisions them – usually within a matter of milliseconds or seconds. This is a huge shift from how things were done traditionally. It used to be that if you wanted, say, a new server provisioned in a large company, you had to submit a 17-page form to IT, it had to be approved in triplicate, and then you had to wait 4-6 months for the server to be set up. On-demand self-service means basically anyone can request incredibly powerful services and have them provisioned nearly instantly. This is remarkably helpful if you want to innovate, experiment and fail fast. The dark side of On-Demand Self Service, however, is shadow IT: services spun up without IT’s knowledge or oversight.

Broad Network Access

The next defining characteristic is Broad Network Access, which means cloud resources are accessible from multiple device types and from multiple locations. Basically, the primary way we access cloud services is through a web browser across a network. And nearly everything has a web browser and internet access built in nowadays, so you can access cloud services from basically everywhere.

Resource Pooling

The next defining characteristic is a really important one: Resource Pooling. There are 3 primary resources you need to do anything from a technology perspective:

First, compute: the ability to fetch instructions, decode them, execute them, and store the results. Essentially the ability to execute code.

Second resource is storage: you need to be able to store a bunch of bits somewhere – the data and code.

And the third resource is network: you need to be able to move a bunch of bits around everywhere.

These are the 3 primary resources you need to do anything in the cloud: compute, storage and network. And a major defining characteristic of cloud is that these 3 primary resources are pooled and shared. As a user of cloud services you don’t typically have direct physical access to any of these resources. You’re not directly accessing a CPU, or directly accessing a hard drive, or directly sending your traffic to a physical switch or a router. Instead, there is a layer of virtualization between the user and the resources. So instead of directly accessing a physical CPU in a physical server, you are using a virtual machine or a container, or instead of accessing a physical hard drive you’re using a virtual disk or object storage, or instead of accessing a physical switch you’re using a virtual switch. This layer of virtualization allows all the resources of the cloud to be pooled, to be shared amongst users much more effectively.

Resource pooling is what makes it so easy to provision new services near instantly or rapidly increase or decrease your usage of compute, storage and network.
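To make the pooling idea a little more concrete, here is a minimal sketch in Python. It is not how any real provider does it: the `ResourcePool` class, the tenant names and the 64-vCPU host are all made up for illustration. The point is simply that tenants draw slices from a shared pool, and whatever one tenant releases becomes available to the next.

```python
from dataclasses import dataclass, field


@dataclass
class ResourcePool:
    """A shared pool of one resource type (hypothetical example: vCPUs)."""
    capacity: int
    allocations: dict = field(default_factory=dict)  # tenant -> amount held

    @property
    def free(self) -> int:
        """Capacity not currently held by any tenant."""
        return self.capacity - sum(self.allocations.values())

    def allocate(self, tenant: str, amount: int) -> bool:
        """Give a tenant a slice of the pool if enough is free."""
        if amount <= 0 or amount > self.free:
            return False
        self.allocations[tenant] = self.allocations.get(tenant, 0) + amount
        return True

    def release(self, tenant: str) -> int:
        """Return everything a tenant holds to the pool."""
        return self.allocations.pop(tenant, 0)


pool = ResourcePool(capacity=64)          # made-up host with 64 vCPUs
pool.allocate("tenant-a", 16)
pool.allocate("tenant-b", 40)
too_big = pool.allocate("tenant-c", 16)   # only 8 free, so this is refused
pool.release("tenant-a")
retry = pool.allocate("tenant-c", 16)     # the freed capacity is reusable
```

Notice that no tenant ever touches a physical CPU here. They only see the amount they were granted, which is the job the virtualization layer does in a real cloud.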

Rapid Elasticity

Which brings us to the fourth characteristic: Rapid Elasticity and Scalability. You have the ability in the cloud to quickly provision and deprovision resources. If you need access to a bunch of additional bandwidth: no problem you can rapidly gain access to vast amounts of additional bandwidth. Or if you need terabytes of additional storage: no problem. And you can also just as easily deprovision. So you can rapidly increase and decrease your usage of cloud services.
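Here is a rough sketch of what an elasticity decision could look like. The function name, the 60% target and the 1 to 20 instance limits are assumptions picked for the example, not values from any particular provider. The idea: work out how many instances would bring average CPU utilization back to the target, then scale out or in to that number.

```python
def desired_instances(current: int, cpu_percent: int,
                      target_percent: int = 60,
                      minimum: int = 1, maximum: int = 20) -> int:
    """Return how many instances would bring utilization near the target.

    Uses integer ceiling division so the result never under-provisions.
    """
    if current < 1 or target_percent < 1:
        raise ValueError("current and target_percent must be at least 1")
    wanted = (current * cpu_percent + target_percent - 1) // target_percent
    return max(minimum, min(maximum, wanted))


scale_out = desired_instances(current=4, cpu_percent=90)   # busy: add capacity
scale_in = desired_instances(current=4, cpu_percent=15)    # quiet: shed capacity
```

The same calculation handles both directions, which is the whole point of elasticity: provisioning and deprovisioning are equally easy.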

Measured Service

And the final characteristic is Measured Service which means the cloud service provider is closely monitoring your usage of the cloud and you only pay for what you use. Resource usage is monitored and reported to the consumer, providing visibility and transparency of rates and costs.
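A small sketch of the pay-for-what-you-use idea follows. The line items and unit prices in `RATES` are invented for illustration and do not reflect any real provider's pricing. What it shows is metered usage being turned into an itemized bill, which is the visibility into rates and costs that Measured Service is about.

```python
from decimal import Decimal

# Hypothetical unit prices, invented for illustration only.
RATES = {
    "vm_hours": Decimal("0.05"),          # per VM-hour
    "storage_gb_month": Decimal("0.02"),  # per GB stored for a month
    "egress_gb": Decimal("0.09"),         # per GB transferred out
}


def monthly_bill(usage: dict) -> dict:
    """Turn metered usage into a per-line-item bill plus a total."""
    lines = {item: RATES[item] * Decimal(str(qty)) for item, qty in usage.items()}
    lines["total"] = sum(lines.values(), Decimal("0"))
    return lines


# One VM running all month, 500 GB stored, nothing transferred out.
bill = monthly_bill({"vm_hours": 720, "storage_gb_month": 500, "egress_gb": 0})
```

Because egress usage was zero, that line item costs nothing: you only pay for what you actually used.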

So those are the five characteristics of cloud computing.

Service Models

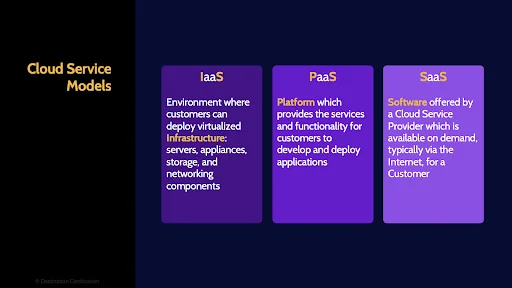

Let’s now talk about the three most common cloud service models – essentially the most common types of cloud computing.

IaaS

Infrastructure as a Service is an environment where customers can deploy virtualized Infrastructure: servers, appliances, storage, and networking components. Basically, allowing a customer to recreate an entire physical data center as virtualized components: virtual firewalls, virtual routers, virtual servers, and so forth.

PaaS

Platform as a Service provides the services and functionality for customers to develop and deploy custom applications. Customers can create their own custom applications without having to worry about all the underlying complexity like servers, and the network and storage.

SaaS

And Software as a Service is where a customer can rent access to an application hosted in the cloud.

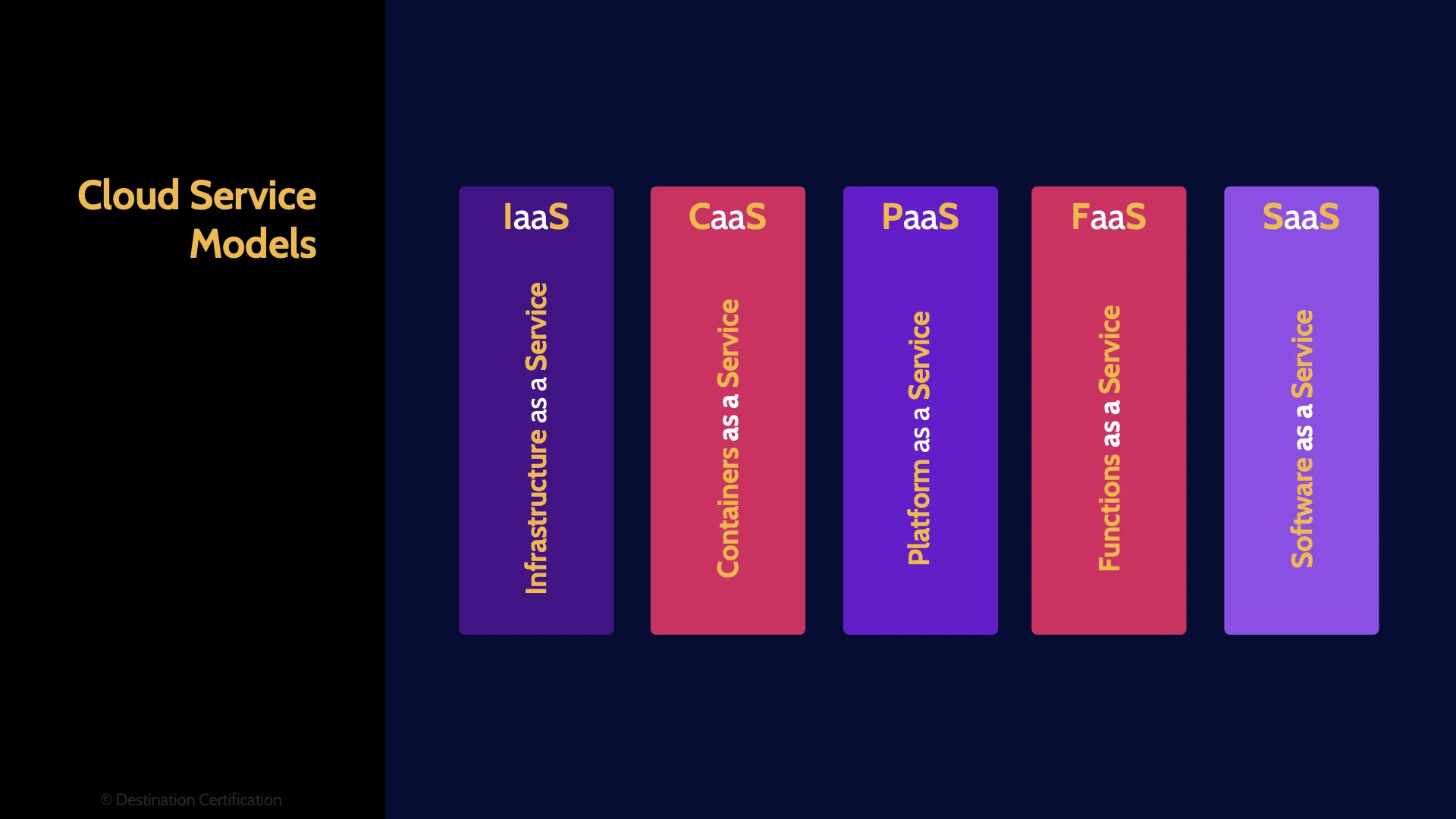

Those are the historical Service Models. We also need to talk about Containers as a Service and Serverless, more aptly called Functions as a Service. Containers and serverless are becoming increasingly popular and have a lot of developer momentum behind them, and there are now some basic questions on the exam about them, so we need to talk about how they fit in amongst Infrastructure, Platform and Software as a Service.

Containers as a Service fits in between Infrastructure as a Service and Platform as a Service. And serverless fits in between Platform as a Service and Software as a Service.

I’ll talk in more detail about exactly what Containers and Serverless are shortly.

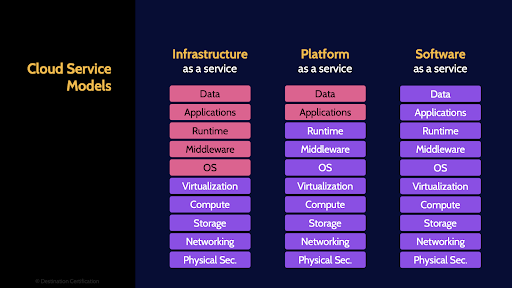

For any flavor of cloud service, it is critically important to understand who is responsible for what. If there are no clearly defined responsibilities as to who is doing what, you can generally assume no one is doing it.

This diagram shows varying levels of who is responsible for what for the different service models. You absolutely do not need to memorize the specifics of what the customer is responsible for, the pink boxes, and what the cloud service provider is responsible for, the purple boxes.

Just know this, responsibilities must be clearly identified and assigned. And the onus for doing this is on the customer. The customer ultimately remains accountable for the protection of any data and services they outsource to the cloud, so the customer must ensure responsibilities are clearly defined in contracts and Service Level Agreements, and the customer must ensure that the cloud service provider has controls in place which are operating effectively to meet the defined requirements. This assurance can be provided through service level reports or more commonly via SOC 2 reports which I talk about in the first mind map video for domain 6 – link in the description below.

Deployment Models

Now let’s talk about the major cloud deployment models.

Public

Public cloud is cloud services that are available to anyone – to the public. A cloud service provider owns and operates cloud infrastructure that is open for use by the general public.

Private

Private cloud, on the other hand, is cloud infrastructure provisioned for exclusive use by a single customer. Private clouds can be owned and operated by the customer or by a cloud service provider, they may exist on or off premises, and they can be physically or logically separated from other customers. It’s complicated.

Community

Community cloud is cloud infrastructure that is only accessible by a small community of organizations that have similar shared concerns (similar security and regulatory requirements, for instance).

Hybrid

And hybrid cloud is simply some combination of public, private and community cloud. For instance, it is very common for large organizations to have their own dedicated on-premises private cloud for sensitive data, and to also use the public cloud for less sensitive data and workloads. Thus they have a hybrid model.

Virtualized Compute

Next let’s dig into a little more detail on how you can access virtualized compute resources. As I mentioned as part of the Resource Pooling characteristic, you don’t typically have direct access to a physical server and physical CPUs in the cloud, so how do you access compute – how do you run your code and applications?

You have access to various types of virtualized computing options and we’ll talk about Virtual Machines, Containers and Serverless.

Virtual Machine (VM Instance)

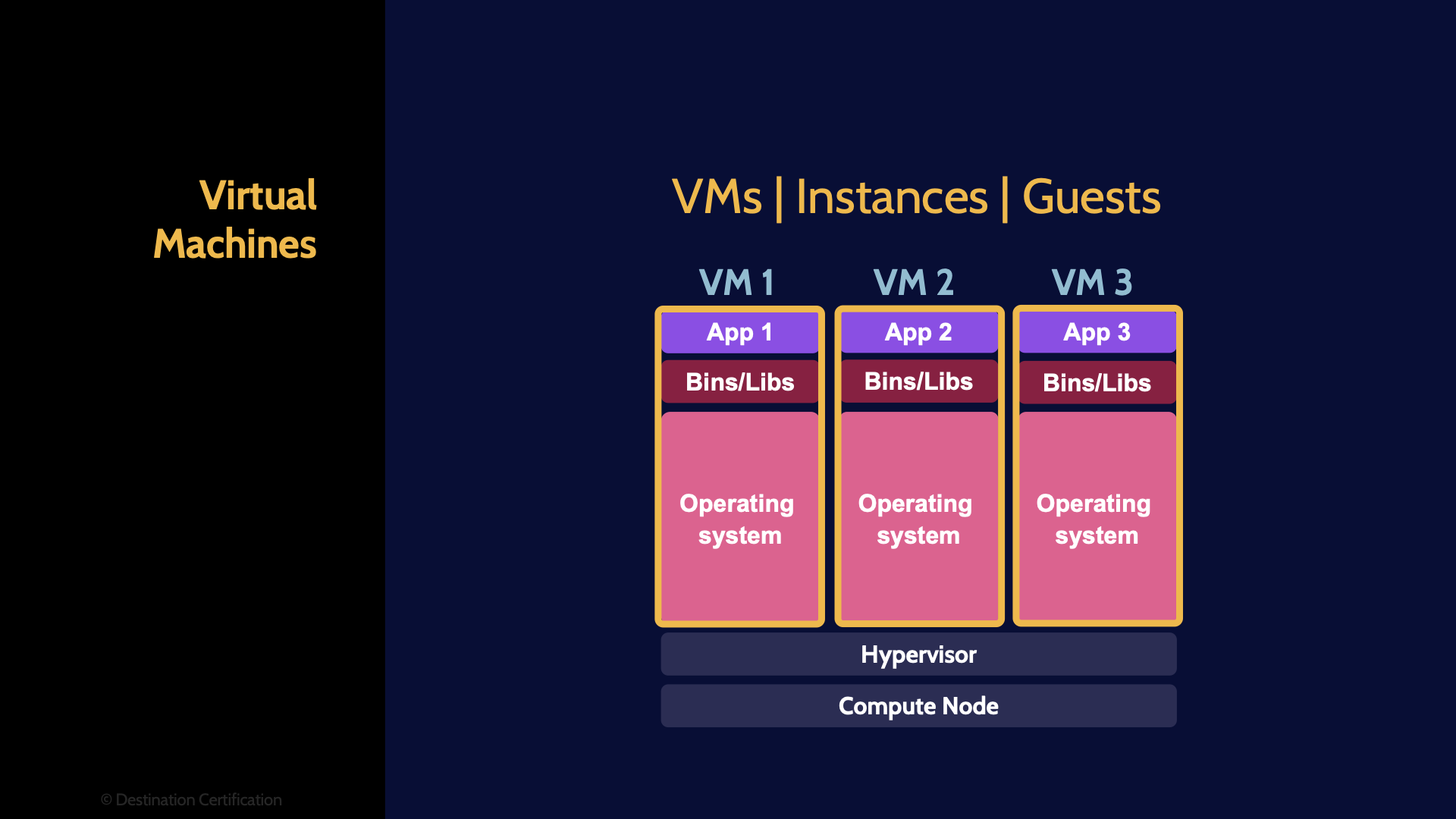

Starting with Virtual Machines. A virtual machine is an operating system and some applications running on top of a layer of abstraction instead of running directly on the physical hardware.

This is a good example of where a picture is probably worth a thousand words. As you can see in this diagram here at the bottom we have the “Compute Node” this is essentially the physical server comprising a CPU, a bunch of RAM, a Network Interface Card, etc. – the physical hardware. In a traditional computer the operating system would then be running directly on top of the hardware, but in a virtual machine we have a layer of abstraction between the hardware and the OS. What is providing this layer of abstraction? The Hypervisor. The Hypervisor is a piece of software. I like to think that the job of the hypervisor is to be a giant liar. Here’s why: an operating system expects to have total and complete control of all the underlying hardware, because a key job of an operating system is to control all the underlying hardware in a coordinated fashion. If we wanted to run two or more operating systems simultaneously on the same underlying hardware, it would never work, because each OS would be trying to have total and complete control and they would therefore conflict with each other.

And yet we do this all the time by creating a layer of abstraction between the hardware and the OS – the hypervisor, the giant liar. The hypervisor is simulating the underlying hardware to each OS. So each operating system thinks it is controlling the underlying hardware, but is in fact just controlling virtual hardware that the hypervisor is simulating.

This setup therefore allows us to run multiple operating systems and their applications simultaneously on one physical server, one compute node.

And as you can see in the diagram here, we have 3 virtual machines. Watch out on the exam that virtual machines might also be referred to as Instances or Guests.

Hypervisor (VM Monitor)

And these virtual machines are running atop the hypervisor, which might also be referred to as a VM Monitor.

One little hint I’ll add here: make sure you’re familiar with the difference between Type 1 (hardware) hypervisors and Type 2 (software) hypervisors. Which is more secure and efficient? Type 1.

Containers

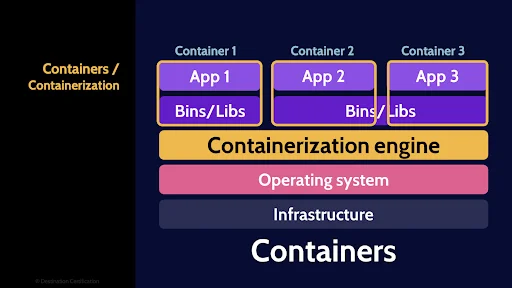

Alright, next let’s talk about Containers. Containers are highly portable code execution environments that run within an operating system, sharing and leveraging resources of that operating system.

The basic idea here is you can package your code inside a container. Much like an intermodal shipping container.

The cool thing about a shipping container, is once you’ve stuffed whatever you want to inside you can now move that container around the world via ship, truck, train, whatever you want without ever having to re-pack the container. It’s highly portable.

Same idea with a container that you can put your code in. You stuff your code in the container and then you can run that container on your Mac laptop, an on-premises Linux server, Microsoft Azure, Google Cloud – containers make your code highly portable.

Container Engine

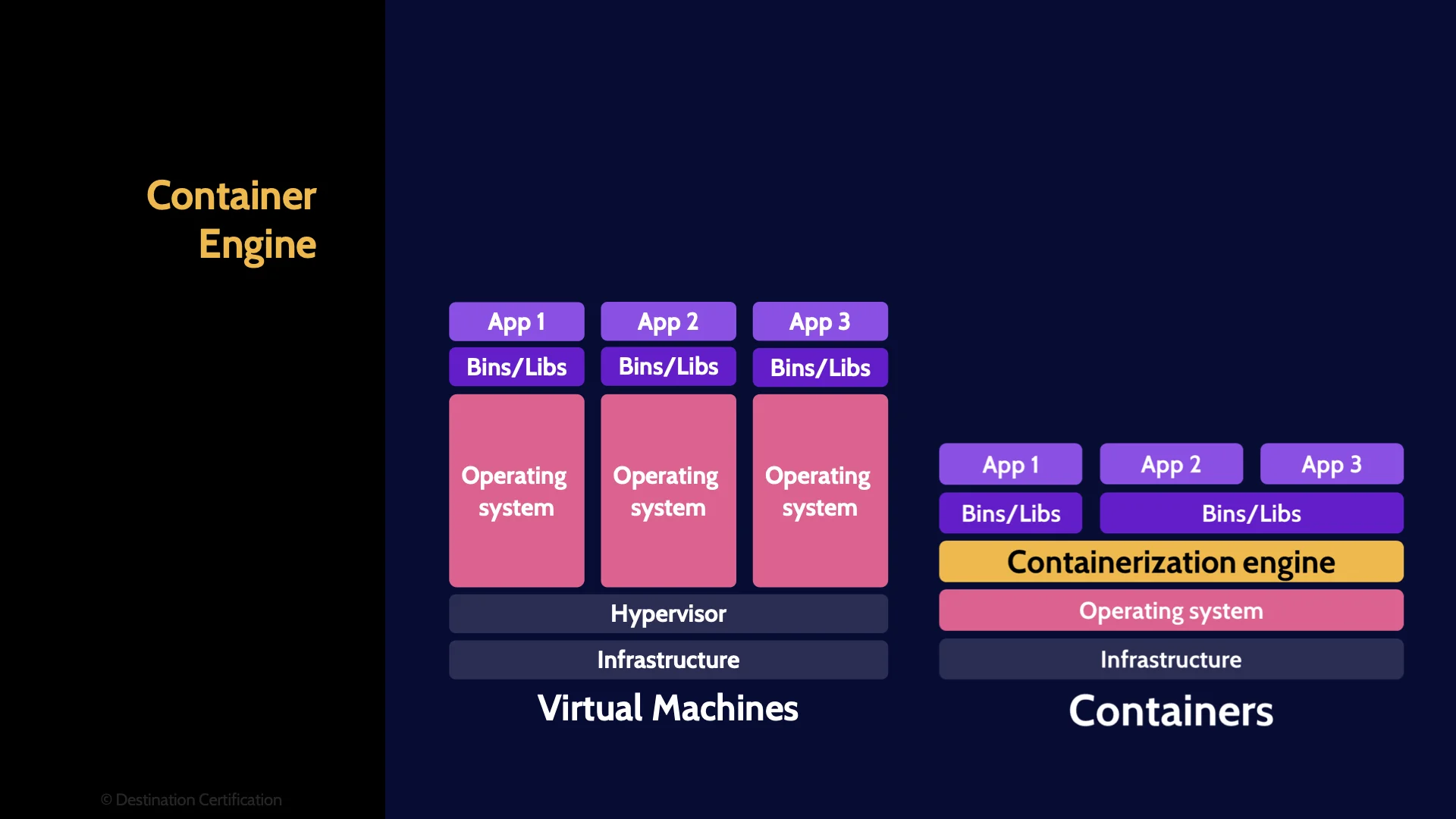

Just like with virtual machines, there is a layer of abstraction that a container runs on top of. The difference is where this layer of abstraction is located.

With virtual machines, the abstraction is between the hardware and the operating system. With containers, the abstraction is between the operating system and the containers.

This layer of abstraction is called the containerization engine.

Serverless

And the final type of virtualized compute we are going to talk about is Serverless – or more correctly called Functions as a Service. Before we talk about serverless though, I think it’s helpful to understand a fundamental concept about serverless – and that is about separating an application into a bunch of interconnected functions.

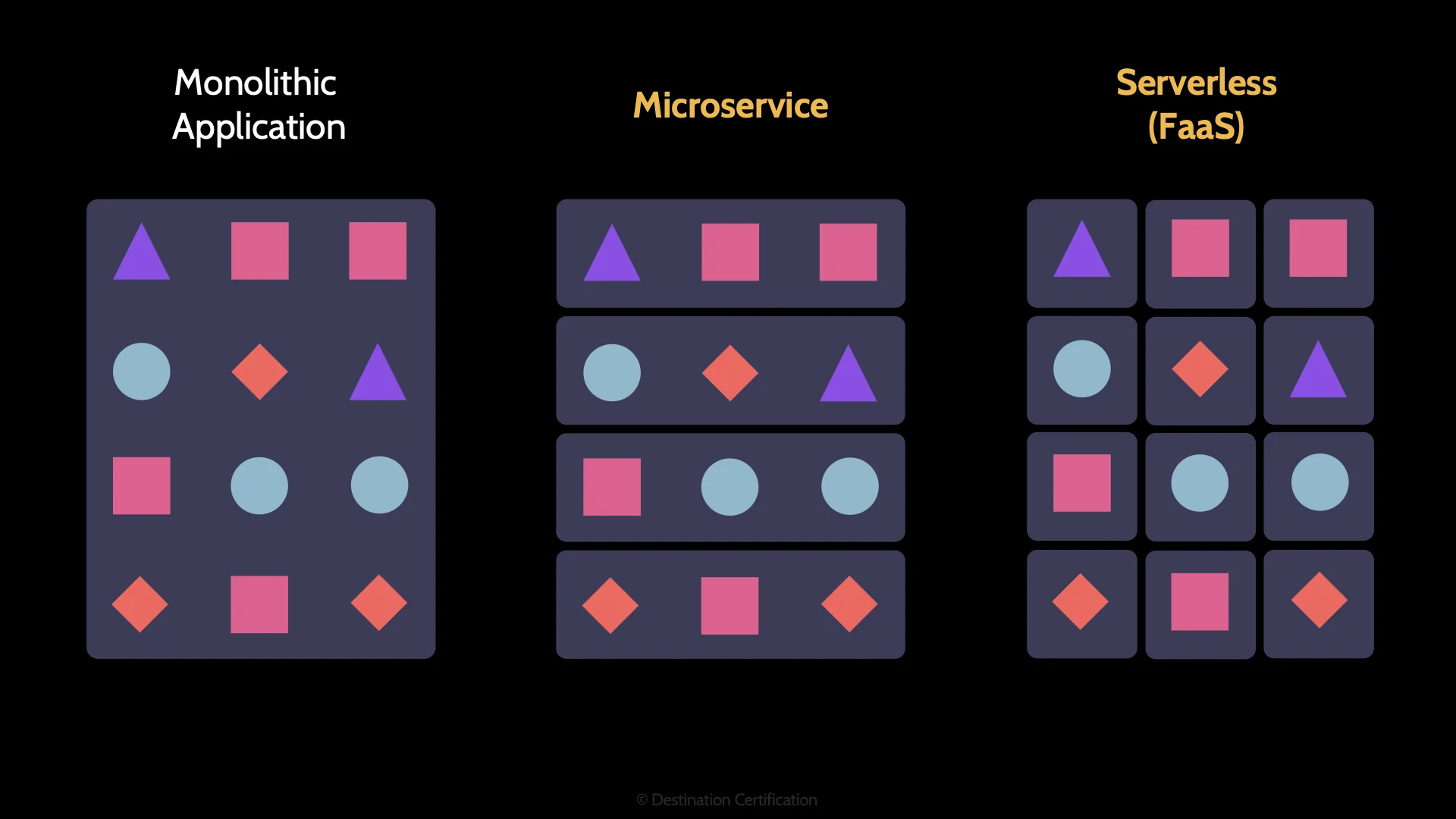

Take a look at this diagram here. A more historical way of developing an application is to create a monolithic application. This is where all the functions of the application – all the coloured shapes – are packaged together into a large executable. A disadvantage of this approach is that if the demands, the usage of the application grows, it can be difficult to scale the performance of the application. And often only certain functions or groups of functions need to be scaled.

Enter the next approach: Microservices. The idea here is you take the same application, the same functionality, but you break the application down into groupings of functionality called microservices. One of the advantages of this, is that it’s now easier to scale the application as you can focus on scaling whichever microservices you need to.

Taking this decomposition a step further, we arrive at functions as a service. Again, the same application, providing the same functionality, but we’ve broken the application down into individual functions which talk to each other via API calls.

This is a fundamental aspect of serverless: Simple functions are written and stored in the cloud, and these functions can be called as much or as little as desired. As a developer you basically don’t have to think about the infrastructure at all. If you want to call a function a million times a second or once every 3 weeks, it is exactly the same function. And if you never call the function you pay nothing.
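To picture this, here is a toy sketch. The `handler` function is the only thing a developer would write. `ToyPlatform` is a made-up stand-in for the provider's side, with an invented per-call price, so it does not represent any real service's API or billing.

```python
def handler(event: dict) -> dict:
    """A single stateless function: everything it needs arrives in the event."""
    name = event.get("name", "world")
    return {"status": 200, "body": f"Hello, {name}!"}


class ToyPlatform:
    """Hypothetical provider: runs functions on demand and meters each call."""

    PRICE_PER_CALL_MICROCENTS = 20  # invented price, for illustration only

    def __init__(self):
        self.calls = 0

    def invoke(self, function, event: dict) -> dict:
        """Run the function once and count the invocation."""
        self.calls += 1
        return function(event)

    def bill_microcents(self) -> int:
        """The bill depends only on how many times functions were called."""
        return self.calls * self.PRICE_PER_CALL_MICROCENTS


platform = ToyPlatform()
idle_bill = platform.bill_microcents()        # never called, nothing owed
response = platform.invoke(handler, {"name": "CISSP"})
for _ in range(2):
    platform.invoke(handler, {})              # same function, called again
```

Whether `handler` runs three times or three million times, the code is identical. Only the meter changes.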

I’m obviously glossing over a huge amount of fascinating functionality here, but again, the CISSP exam is not a cloud exam, so that basic definition should be sufficient.

Identity Provider

Now we are going to spend a fair bit of time talking about identification, authentication and authorization in the cloud. The use of cloud basically destroys the last vestiges of the formerly pervasive practice of organizations having a well-defined perimeter and tightly controlling access to their internal trusted network.

When an organization moves to the cloud, this concept of a trusted internal network essentially disappears.

Identity is the new perimeter in the cloud.

In the cloud you should assume that all traffic is a potential threat. There is no trusted internal network anymore. Therefore, as security professionals we must ensure that all traffic, all users are very thoroughly verified so we know exactly who is accessing what.

This approach is often referred to as the zero-trust model for security and it requires very robust identification, authentication and authorization controls. So, let’s dig into these controls by first talking about where we can store users’ identities.

Local

The two main places where we can store our users’ identities are locally or in the cloud. Locally implies that some system, usually Active Directory, is being maintained by the organization on-premises – in the organization's own data center – to store user identities.

Cloud

And cloud obviously implies that a cloud service is being used to store an organization's user identities. Okta is a good example of a cloud-based identity provider.

Cloud Identity

Next, we have several options as to the types of identities that we can use.

Cloud

A cloud identity is an identity which is created and managed solely in the cloud.

Linked

Linked Identities are two separate identities, one in the cloud and one local. There is simply some indication of a linkage between them, BUT changes to one are NOT automatically synchronized to the other linked identity.

Synced

Synced identities are very similar. You have two identities, one in the cloud and one local. The key difference here is that these two identities are synchronized. A change to one identity is automatically reflected / synchronized in the other identity.

Federated

And Federated identities. A user has one identity that allows them to gain access to both local and cloud based services via Federated Access.
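The difference between linked and synced identities is easy to mix up, so here is a minimal sketch of it. The class names and the email attribute are assumptions made for the example, not part of any identity product. Both pairs hold one local and one cloud identity. The only difference is whether a change to one is pushed to the other.

```python
from dataclasses import dataclass


@dataclass
class Identity:
    username: str
    email: str


class LinkedPair:
    """Two independent identities that merely point at each other."""

    def __init__(self, local: Identity, cloud: Identity):
        self.local, self.cloud = local, cloud

    def update_local_email(self, email: str) -> None:
        self.local.email = email      # the cloud identity is left untouched


class SyncedPair(LinkedPair):
    """The same two identities, but a change to one is pushed to the other."""

    def update_local_email(self, email: str) -> None:
        self.local.email = email
        self.cloud.email = email      # the synchronization step


linked = LinkedPair(Identity("rob", "rob@old.example"),
                    Identity("rob", "rob@old.example"))
linked.update_local_email("rob@new.example")

synced = SyncedPair(Identity("rob", "rob@old.example"),
                    Identity("rob", "rob@old.example"))
synced.update_local_email("rob@new.example")
```

After the update, the linked pair has drifted apart while the synced pair still matches.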

Roles

I’ve already mentioned that it is incredibly important to have clearly defined accountabilities and responsibilities in the cloud. Let’s define these terms.

Accountable

Accountability refers to an individual who has ultimate ownership, answerability, blameworthiness, and liability for an asset. They are the owner of the asset. Accountability should be assigned to only one person for each asset, because ultimately, accountability means who is the throat that gets choked if something goes wrong – that is the accountable person. Accountability CANNOT be delegated. The accountable person can set the policies and requirements for protecting an asset and then delegate those responsibilities to others.

Responsible

Responsibility, therefore, means the doer, the person or multiple people that are in charge of the requirements that were defined by the accountable person. Multiple people can be responsible, and responsibility can be delegated.

Cloud Consumer

Let’s now talk about the various common roles in the cloud and their accountabilities and/or responsibilities. The cloud consumer is the customer, the person or organization that is using cloud services.

Owner / Controller

Individuals within the cloud consumer will be the owners, also known as data controllers, of any data that is stored in the cloud. And very importantly the owners, the data controllers, will be accountable for the protection of any data they store and process in the cloud. And remember the owner cannot delegate their accountability – they remain accountable even if they outsource data to a cloud provider.

Cloud Provider / Processor

The cloud provider, also known as the processor, is of course the cloud service provider. The cloud provider will be responsible for protecting consumer data in the cloud based on the requirements set by the data owner. The cloud provider will also be accountable for running their own infrastructure and protecting their own data.

Cloud Broker

Cloud brokers are middlemen. Cloud brokers are organizations that exist between the consumer and the cloud service provider, and brokers exist to do things like Aggregation, Arbitrage and Intermediation. Essentially to package together various cloud services for a consumer or to add additional functionality to a cloud service provider’s offering.

Cloud Auditor

And cloud auditors, rather obviously, are the people that no one likes because they show up to audit stuff and see if controls are properly designed and operating effectively. Btw, I’m allowed to say that because I have conducted a lot of cloud audits – and no one liked me when I did so.

Protocols

There are various protocols that can be used to enable identification, authentication and authorization in the cloud.

SPML

Service Provisioning Markup Language, SPML, is an XML based framework for exchanging provisioning information (setup, change, and revocation of access) between cooperating organizations. Basically, SPML standardizes and simplifies the process of provisioning access across multiple systems in multiple organizations.

SAML

The next three protocols all enable Federated Access. I talk about Federated Access in a lot more detail in the second video for domain 5 – linked below. SAML, the Security Assertion Markup Language, is a protocol that provides both Authentication and Authorization in Federated Access.

OpenID

OpenID provides only Authentication.

OAuth

And OAuth provides only Authorization capabilities.

Migration

When an organization decides to move some of their systems and data to the cloud there is a lot they need to think about from a security perspective: how do they ensure proper access controls, confidentiality, availability and integrity of the data, portability, interoperability, reversibility, resiliency, and compliance, among many other things.

Data centric

One way to tackle this problem is to take a data centric view. Cloud consumers can focus on the data that they plan to migrate to the cloud, starting with the classification of the data, which in turn drives the security controls that need to be in place for each stage of the data lifecycle: creation, storage, use, sharing, archiving and destruction.

SLA

One important contractual tool that a cloud consumer can use to communicate their requirements to a cloud provider is the SLA, or Service Level Agreement. SLAs are documented commitments by the Service Provider to a Consumer covering things like confidentiality, integrity, availability, responsiveness, and so forth. And SLAs are addendums to the overall Contract.

Forensics

Conducting forensic investigations in the cloud, especially the public cloud, introduces some significant challenges as well as opportunities.

Snapshot, Virtual Disk, Image

One of the primary sources of evidence when conducting an investigation in the cloud is a copy of a VM instance, or snapshot. A VM snapshot is a copy of a VM that preserves the state and data of a virtual machine at a specific point in time – very useful for investigations.

Less useful would be the VM volumes, or virtual hard drives. There is obviously a lot of data stored on a hard drive from a forensic perspective, but a volume contains none of the volatile data, such as the contents of the CPU’s cache and registers or of RAM, that you can capture with a snapshot.

And least useful from a forensic perspective would be a VM image. A pre-built virtual machine. Looking at an image can be useful to help you identify vulnerabilities, misconfigurations, etc. but the image does not contain any of the data of the running system once it is instantiated from the image.

Data Destruction

Alright one final interesting challenge I will talk about related to cloud: defensible data destruction. Many laws and regulations around the world require a data owner to ensure sensitive data, particularly personal data, is defensibly, demonstrably, destroyed.

Crypto Shredding / Erase

One possible method of defensibly destroying data in the cloud is known as crypto shredding or crypto erase. The idea is that the sensitive data is encrypted with an excellent algorithm like AES, and then every single copy of the encryption key must be physically destroyed. With no possibility of recovering the encryption key, the data has effectively been crypto shredded and is unrecoverable – therefore the data has been defensibly destroyed.
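Here is a sketch of the crypto shredding idea, with some loud caveats. To keep it runnable with only the Python standard library, `toy_cipher` is a toy stand-in for AES (a SHA-256-derived keystream XORed with the data) and must not be used for real data. `KeyStore` is a hypothetical key manager invented for the example, and deleting a key from a Python dictionary is only a model of key destruction, not a defensible way to destroy a real key.

```python
import hashlib
import secrets


def toy_cipher(key: bytes, data: bytes) -> bytes:
    """XOR data with a SHA-256-derived keystream.

    Toy stand-in for AES, for illustration only. Applying it twice with
    the same key returns the original bytes.
    """
    stream = bytearray()
    counter = 0
    while len(stream) < len(data):
        stream += hashlib.sha256(key + counter.to_bytes(8, "big")).digest()
        counter += 1
    return bytes(a ^ b for a, b in zip(data, stream))


class KeyStore:
    """Hypothetical key store that tracks every copy of each key."""

    def __init__(self):
        self._copies = {}  # key_id -> list of key copies (e.g. primary, backup)

    def create(self, key_id: str, copies: int = 2) -> None:
        key = secrets.token_bytes(32)
        self._copies[key_id] = [key] * copies

    def get(self, key_id: str) -> bytes:
        return self._copies[key_id][0]  # raises KeyError once shredded

    def shred(self, key_id: str) -> None:
        """Destroy every copy of the key; the ciphertext stays where it is."""
        del self._copies[key_id]


store = KeyStore()
store.create("customer-42")
ciphertext = toy_cipher(store.get("customer-42"), b"sensitive personal data")
recovered = toy_cipher(store.get("customer-42"), ciphertext)  # key still exists

store.shred("customer-42")
try:
    store.get("customer-42")
    key_available = True
except KeyError:
    key_available = False  # no key left, so the ciphertext is unreadable
```

The ciphertext itself is never touched, which is why this approach suits the cloud: you may not be able to find and wipe every copy of the data, but you can control every copy of the key.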

And that is an overview of Cloud within Domain 3, covering the most critical concepts you need to know for the exam.

If you’re finding these MindMaps helpful in your studies, you should check out the CISSP Guidebook we wrote. It’s called Destination CISSP: A concise guide.

We wrote our guidebook to be as concise as possible while still providing the details you need to know to confidently pass the CISSP exam. You can find more details on our guidebook and where to buy it here: https://destcert.com/cissp/guidebook-2024/

Link in the description below as well.

If you found this video helpful you can hit the thumbs up button and if you want to be notified when we release additional videos in this MindMap series, then please subscribe and hit the bell icon to get notifications.

I will provide links to the other MindMap videos in the description below.

Thanks very much for watching! And all the best in your studies!