CISSP Domain 3 - Trusted Computing Base MindMap

Transcript

Introduction

Hey, I’m Rob Witcher from Destination Certification, and I’m here to help YOU pass the CISSP exam. We are going to go through a review of the major topics related to the TCB in Domain 3, to understand how they interrelate, and to guide your studies.

This is the third of 9 videos for domain 3. I have included links to the other MindMap videos in the description below. These MindMaps are one part of our complete CISSP MasterClass.

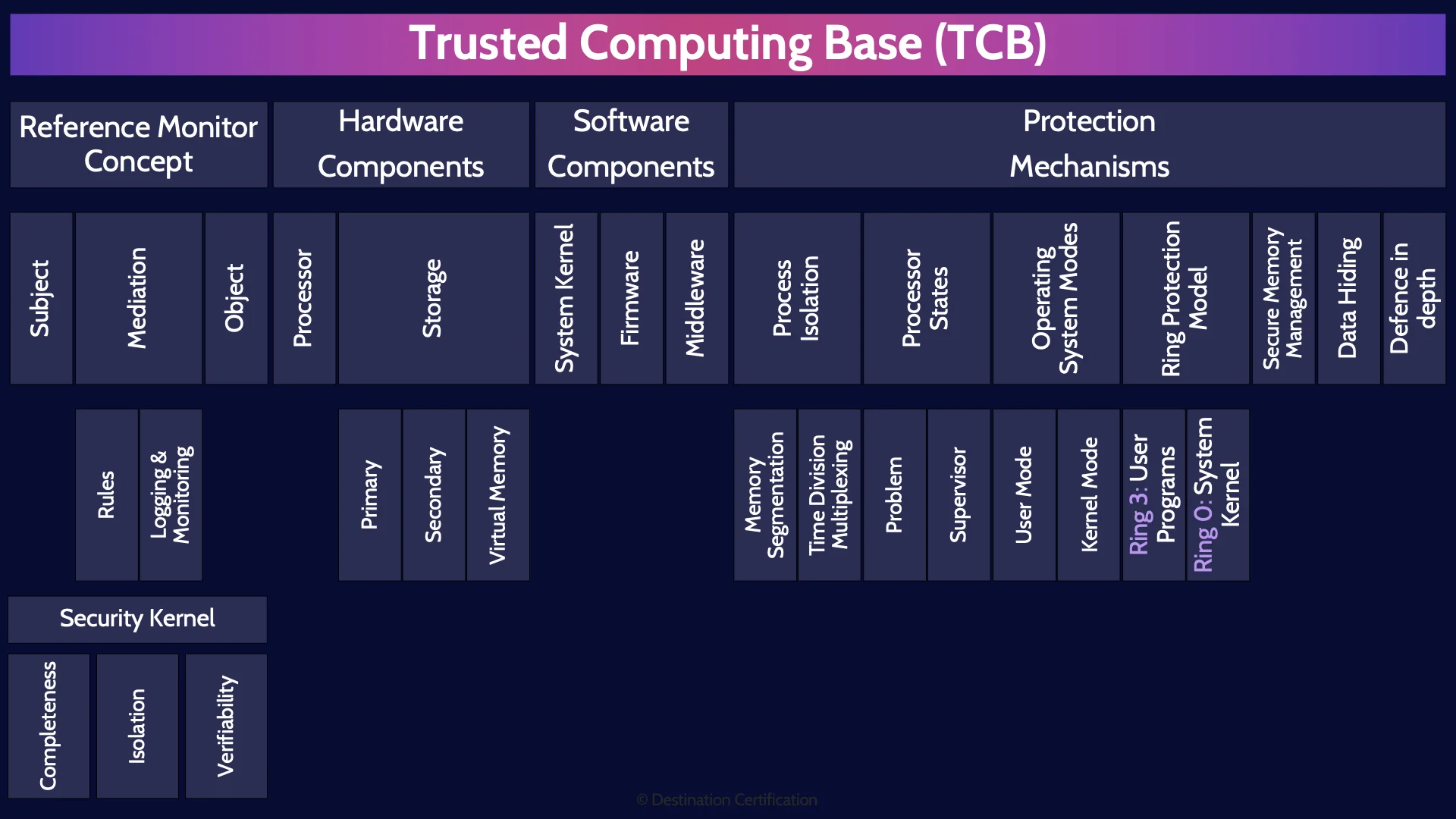

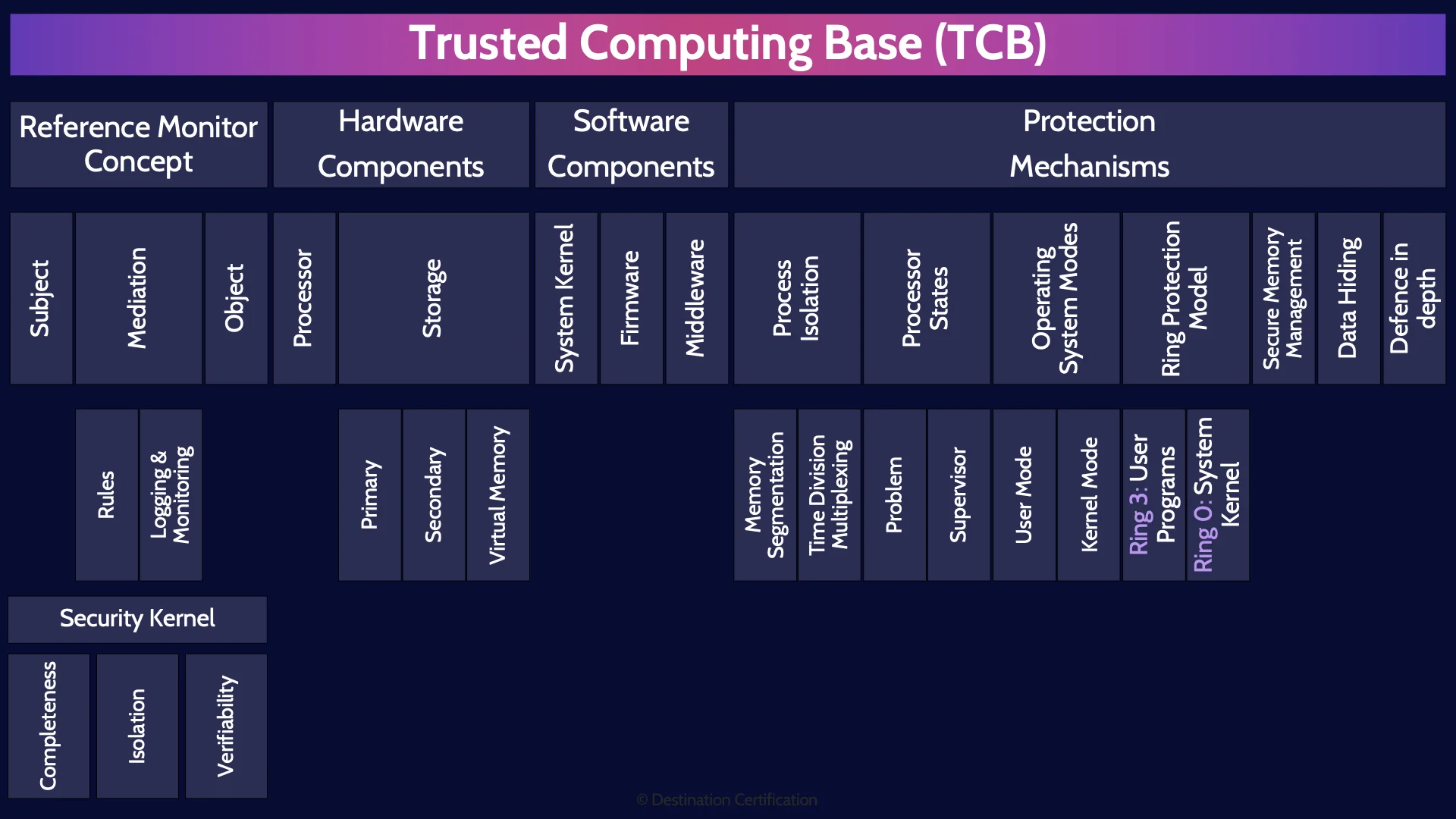

Trusted Computing Base (TCB)

The TCB, the Trusted Computing Base. This is a topic that is prevalent on the exam and not so much in day-to-day life. Honestly, who has ever had a chat about the TCB around the water cooler? No one.

But it is an important topic, so let’s start with a good definition. The TCB is the totality of protection mechanisms within a system or architecture that work together to enforce a security policy.

What is a totality you may be asking? It means the sum, the whole of something. So, remember this, the TCB comprises all of the protection mechanisms, such as people, processes, and technology that are responsible for protecting a system. Watch out for other words like collection, assembly, taxonomy, anything that means all the protection mechanisms. That is the TCB.

So the TCB is the collection of all the protection mechanisms. In the rest of this MindMap we are going to talk through some of those key mechanisms.

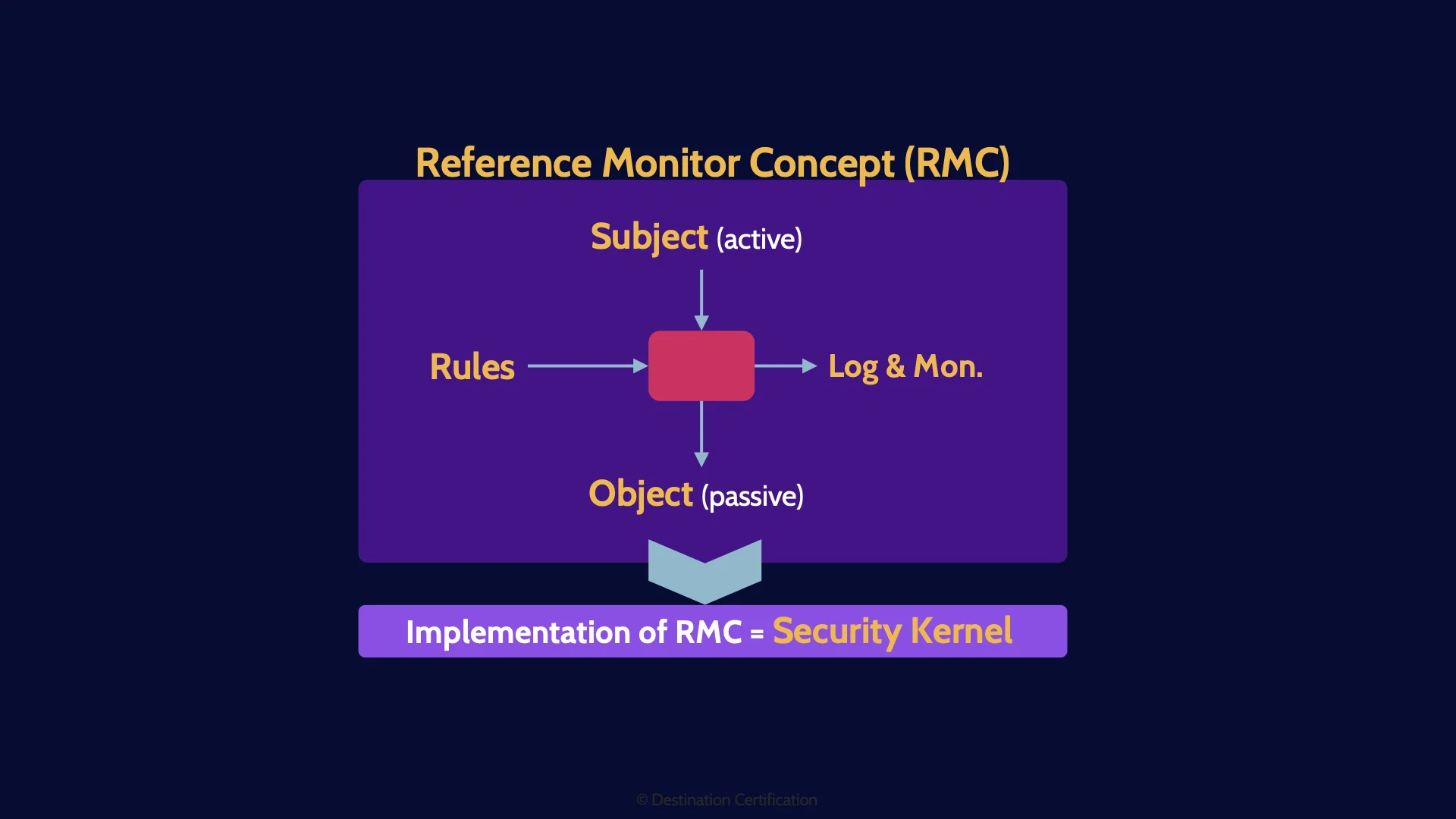

Reference Monitor Concept

Starting with the RMC. The reference monitor concept. The RMC is a really simple concept: if we want to have security, we have to control what subjects are allowed to access what objects, and what specifically the subject can do with the object.

Subject

So, what’s a subject? An active entity. Subjects are things like people and processes that want to access objects.

Mediation

We need to control, to mediate, a subject’s access to an object. This mediation can be all sorts of things. It could be a physical lock on a door controlling which people, which subjects, can access a building, the object. Or it could be the Windows login prompt controlling whether a user can access their computer. Or it could be the system kernel controlling which applications can access the network card. This mediation is anything that controls a subject’s access to an object.

Rules

Now this mediation must decide what subjects can access what objects. How does it decide? Based on a set of rules. We need to provide a set of rules that the mediation will make decisions based on. That by the way, is the functional aspect of the control.

Logging & Monitoring

Every control should also have an assurance aspect. We need to know if the mediation is working correctly on an ongoing basis. How do we get this assurance? We log and monitor.

Object

And the final piece of the RMC is what is being accessed, the object. An object is a passive entity. The object is whatever is being accessed by the subject. So, objects can be things like databases, Word files, buildings, and even other processes.

RMC Diagram

And that is the RMC. A subject, accessing an object, through some form of mediation, that is based on a set of rules, and all of this is logged and monitored to provide assurance that it is working correctly.
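If it helps to see the RMC as code, here is a minimal Python sketch of those pieces working together. The class name, the rule format (subject, object, action), and the example names like `alice` and `payroll.db` are all assumptions made up for illustration, not part of any real product.

```python
from dataclasses import dataclass, field


@dataclass
class ReferenceMonitor:
    """Toy mediation point: every access request goes through mediate()."""

    # Rules: the set of (subject, object, action) triples that are allowed.
    rules: frozenset
    # Assurance: every decision is recorded so it can be monitored later.
    audit_log: list = field(default_factory=list)

    def mediate(self, subject, obj, action):
        allowed = (subject, obj, action) in self.rules
        decision = "ALLOW" if allowed else "DENY"
        self.audit_log.append((subject, obj, action, decision))
        return allowed


# Hypothetical subjects and objects, for illustration only.
monitor = ReferenceMonitor(rules=frozenset({
    ("alice", "payroll.db", "read"),
    ("backup_process", "payroll.db", "read"),
}))
```

Calling `monitor.mediate("alice", "payroll.db", "write")` is denied because no rule allows it, and the denial still lands in `audit_log`.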

Now there’s one more important part I want to highlight about the Reference Monitor Concept. It is just a concept. To make it useful, we need to implement it.

Whenever you implement the Reference Monitor Concept, it is known as a security kernel.

Security Kernel

We use security kernels everywhere in security. Wherever we want to control a subject’s access to an object we control that access with a security kernel. Thus, you can find many examples of security kernels in hardware, firmware, and software.

Completeness

To have security, the RMC and its implementation, the Security Kernel, must satisfy 3 important principles. The first principle is completeness, which means a subject is never able to bypass the mediation. For example, there are no backdoors.

Isolation

The second principle is isolation, which means the rules used to control the mediation are tamperproof. The rules can only be changed by someone who is authorized to do so. Just remember isolation means the rules are tamperproof.

Verifiability

And the third principle is verifiability, which means we are logging and monitoring to verify that the mediation is working correctly. This is the assurance aspect.
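To make the three principles concrete, here is a rough Python sketch of a toy security kernel. Everything in it (the class, the method names, the `rule_admins` idea) is an assumption for illustration. Python’s leading underscores are only a naming convention, so this shows the intent of completeness and isolation rather than truly enforcing them.

```python
class SecurityKernel:
    """Toy security kernel illustrating completeness, isolation, verifiability."""

    def __init__(self, rules, rule_admins):
        self._rules = set(rules)                  # allowed (subject, object) pairs
        self._rule_admins = frozenset(rule_admins)
        self._objects = {}                        # objects live behind the kernel
        self.audit_log = []

    def store(self, name, data):
        self._objects[name] = data

    def access(self, subject, name):
        # Completeness: this method is meant to be the only path to an object.
        allowed = (subject, name) in self._rules
        # Verifiability: log every decision, allowed or not.
        self.audit_log.append(("access", subject, name, allowed))
        if not allowed:
            raise PermissionError(f"{subject} may not access {name}")
        return self._objects[name]

    def grant(self, requester, subject, name):
        # Isolation: only authorized administrators may change the rules.
        authorized = requester in self._rule_admins
        self.audit_log.append(("grant", requester, (subject, name), authorized))
        if not authorized:
            raise PermissionError(f"{requester} may not change the rules")
        self._rules.add((subject, name))
```

A subject who is not in `rule_admins` cannot grant themselves access, and both the failed attempt and any later successful grant show up in the log.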

Hardware Components

Ok, now let’s look at some hardware components within computer systems.

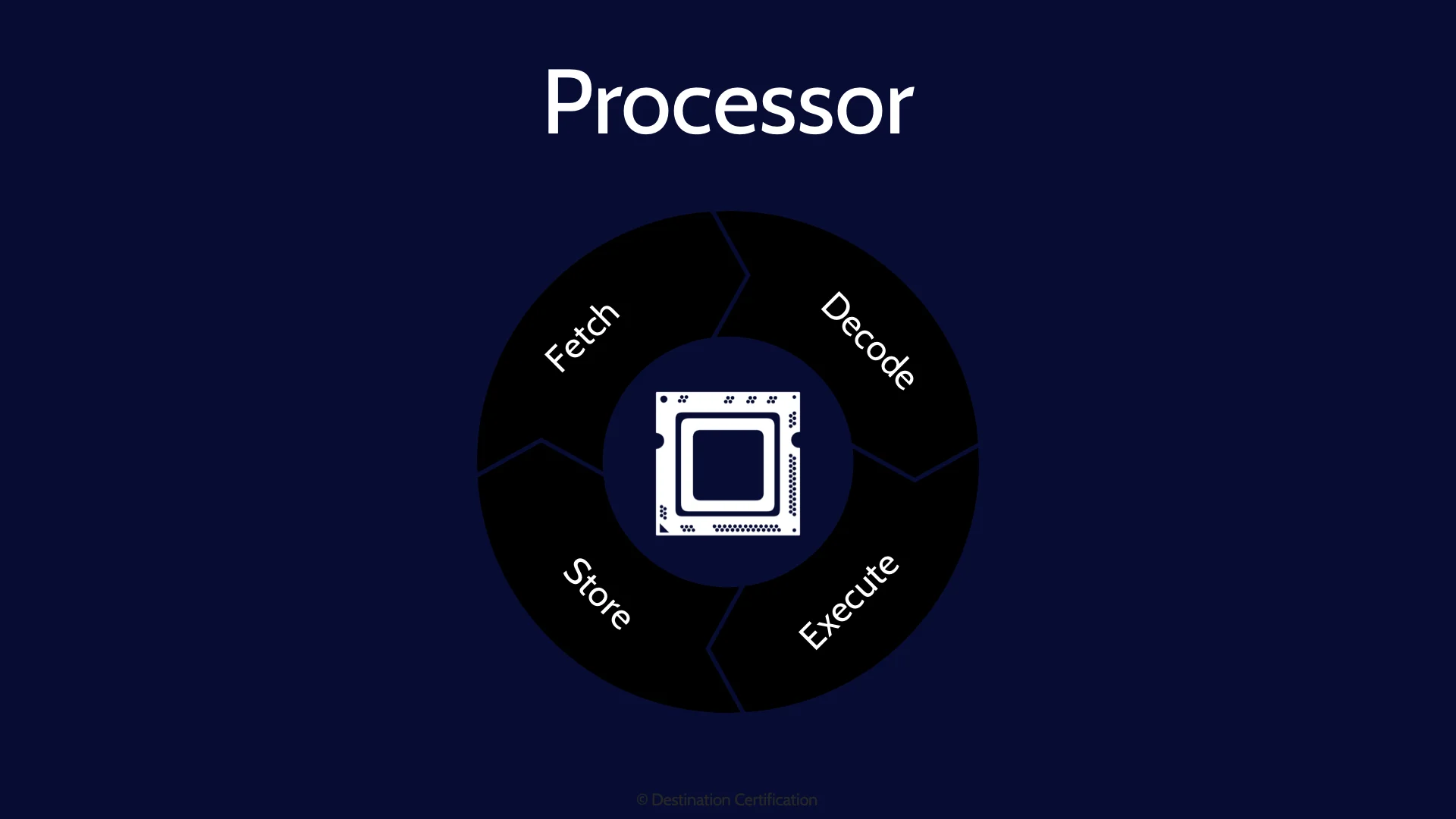

Processor

Starting with CPUs. Central Processing Units. CPUs are the brains of computers. The CPU fetches instructions, decodes them, executes them, and stores the results. And these fetch, decode, execute, and store steps run millions of times per second allowing us to run multiple extremely complex applications simultaneously via multi-tasking.
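Here is a toy Python sketch of that fetch, decode, execute, and store loop. The three-instruction set (`LOAD`, `ADD`, `HALT`) and the two registers are invented for illustration, and a real CPU is vastly more complex.

```python
def run(program):
    """Run a list of text instructions on a toy two-register CPU."""
    registers = {"A": 0, "B": 0}
    pc = 0  # program counter: index of the next instruction
    while pc < len(program):
        instruction = program[pc]                # fetch
        opcode, *operands = instruction.split()  # decode
        pc += 1
        if opcode == "LOAD":                     # execute: LOAD <reg> <value>
            reg, value = operands
            result = int(value)
        elif opcode == "ADD":                    # execute: ADD <reg> <other>
            reg, other = operands
            result = registers[reg] + registers[other]
        elif opcode == "HALT":
            break
        else:
            raise ValueError(f"unknown opcode: {opcode}")
        registers[reg] = result                  # store
    return registers
```

For example, `run(["LOAD A 2", "LOAD B 3", "ADD A B", "HALT"])` leaves 5 in register A and 3 in register B.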

Storage

All the operating system and application code, and all the data in a system needs to be stored somewhere, so let’s talk about the different places that data can be stored and why.

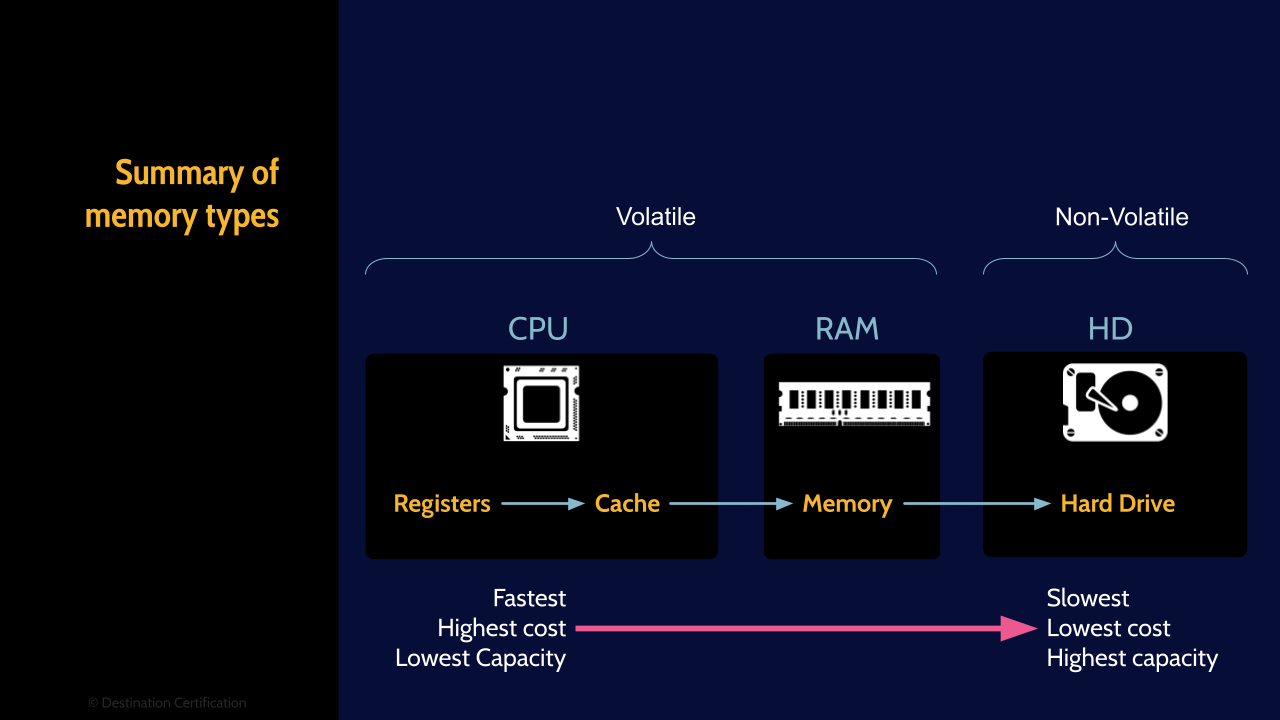

Primary

There are two major categories of storage. Primary and secondary. Primary storage is super fast, small and volatile. Examples of primary storage are the cache and registers built into the CPU, and RAM, Random Access Memory. All these types of storage are extremely fast, offer relatively little storage space and are volatile. What is volatile? It means that when the power is turned off, any data in volatile memory disappears into the ether. It is gone.

Secondary

Secondary storage is basically the inverse: it is much slower, offers much more storage space, and it is non-volatile. Examples of secondary storage are magnetic hard drives, SSDs (solid-state drives), optical media like CDs and DVDs, and tapes.

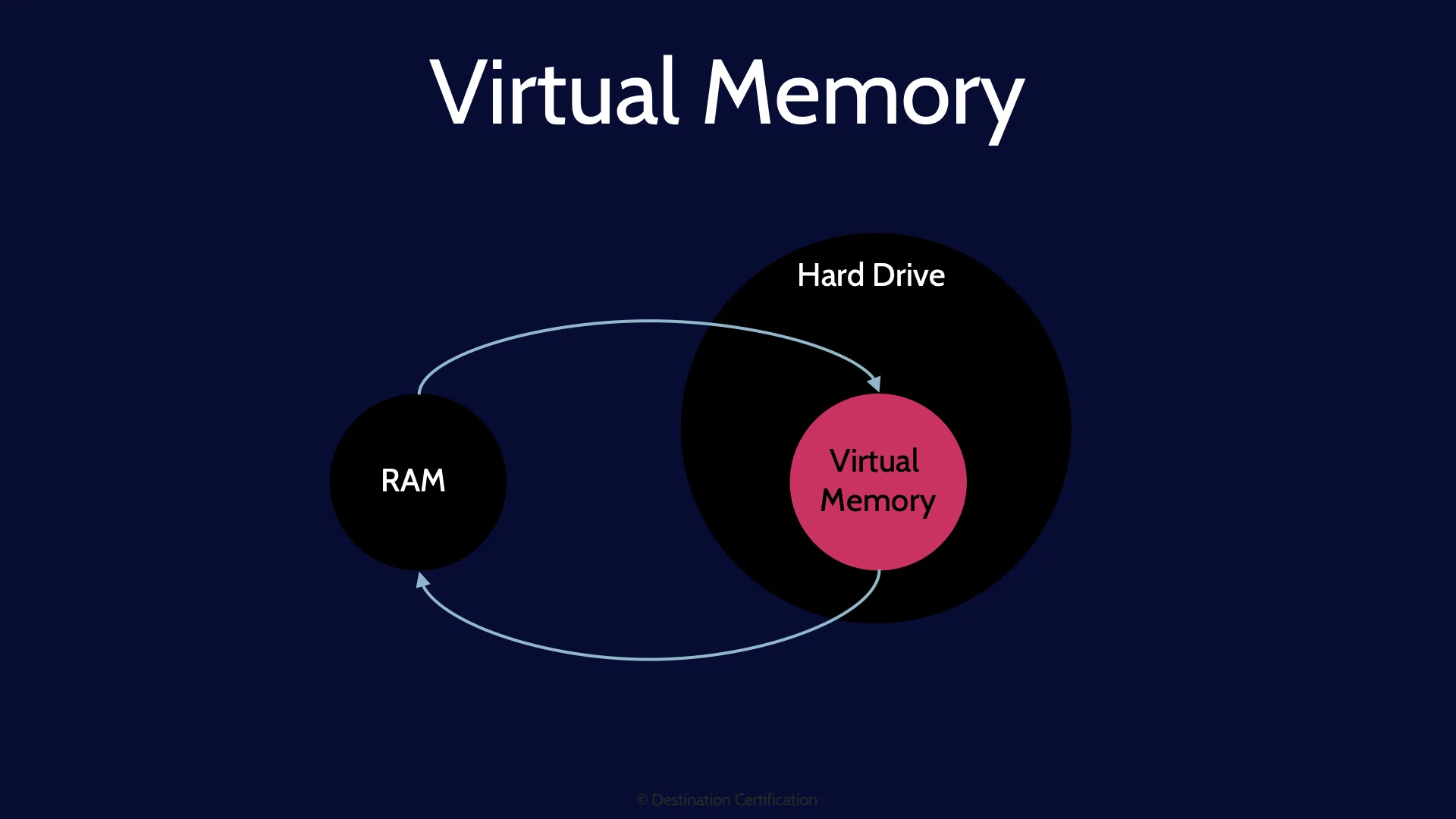

Virtual Memory

The last type of memory we will talk about is not actually a type of memory; rather, it is a memory management technique. As I mentioned, RAM is relatively small. Whenever you load a program or open a new application, some or all of the application code and required data will be copied from the slow hard drive into the much faster RAM, so that the code and data can be accessed much more quickly by the CPU. The problem is that because the amount of storage space in RAM, 8 GB, 16 GB, 32 GB, is relatively small, if you have too many programs open, you can run out of RAM and things start to crash, potentially all the way to a blue screen of death.

To avoid this problem, the operating system will temporarily transfer some of the less frequently used data from RAM back onto the hard drive. This process is often referred to as paging. And it essentially simulates having more memory in the system than you actually have. Virtual memory.
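Paging can be sketched in a few lines of Python. This is a simplified model, not how any particular operating system does it: the class name is made up, RAM is modeled as a fixed number of page frames, and the choice to evict the least recently used page is an assumption picked to keep the example short.

```python
from collections import OrderedDict


class TinyVirtualMemory:
    """Toy paging model: a small 'RAM' backed by a larger 'disk'."""

    def __init__(self, ram_frames):
        self.ram_frames = ram_frames
        self.ram = OrderedDict()  # page -> data, least recently used first
        self.disk = {}            # pages that have been paged out
        self.page_faults = 0

    def write(self, page, data):
        self._make_resident(page)
        self.ram[page] = data

    def read(self, page):
        self._make_resident(page)
        return self.ram[page]

    def _make_resident(self, page):
        if page in self.ram:
            self.ram.move_to_end(page)  # mark as most recently used
            return
        self.page_faults += 1
        if len(self.ram) >= self.ram_frames:
            # RAM is full: page out the least recently used page to disk.
            victim, data = self.ram.popitem(last=False)
            self.disk[victim] = data
        # Page in from disk if it was swapped out, else start empty.
        self.ram[page] = self.disk.pop(page, None)
```

With only two frames of RAM, a program can still work with three or more pages. It just pays a page fault, and a slow trip to disk, each time it touches a page that is not currently resident.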

And here’s a diagram that depicts a few types of memory that we just discussed. Starting with the fastest, highest cost, and lowest capacity options on the left and moving on over to the slowest, but lowest cost and highest capacity, on the right.

The memory built into the CPU (registers and cache) and RAM are examples of volatile memory. And a hard drive is a perfect example of non-volatile memory.

Software Components

Let’s now move on to talking about some of the major software components within a system.

System Kernel

We’ll start with the operating system, the system of software that controls all the computer hardware and allows multiple programs to run. Examples of operating systems are Windows, macOS, Linux, Unix, iOS, Android, etc. The core of an operating system, the central part that controls everything, is known as the system kernel. Make sure you do not confuse the system kernel, the core of the operating system, with the security kernel, which is the implementation of the Reference Monitor Concept.

Firmware

Firmware is software that provides low-level control of the underlying hardware. Firmware is stored on the hardware, typically in non-volatile memory such as ROM, Read Only Memory.

Middleware

Middleware is like software glue. Middleware acts as a translator between different incompatible applications enabling interoperability and allowing incompatible applications to talk to each other by passing messages through the middleware, the translator.
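As a small illustration of the translator idea, here is a Python sketch where one application only speaks a semicolon-delimited format and the other only understands JSON. Both applications, their function names, and the message formats are hypothetical, invented purely for this example.

```python
import json


def legacy_billing_export():
    """Hypothetical legacy app: emits 'key=value' pairs separated by ';'."""
    return "id=42;amount=19.99;currency=USD"


def modern_ledger_import(message):
    """Hypothetical modern app: only accepts JSON messages."""
    record = json.loads(message)
    return f"{record['id']}:{record['amount']:.2f} {record['currency']}"


def middleware_translate(legacy_message):
    """The 'software glue': convert the legacy format into JSON."""
    fields = dict(pair.split("=", 1) for pair in legacy_message.split(";"))
    return json.dumps({
        "id": int(fields["id"]),
        "amount": float(fields["amount"]),
        "currency": fields["currency"],
    })
```

Neither application changes. The message simply passes through the translator: `modern_ledger_import(middleware_translate(legacy_billing_export()))`.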

Protection Mechanisms

The next major topic is protection mechanisms, the concepts and software techniques that we use to secure systems and enforce security policies.

Process Isolation

All our modern-day systems are multi-tasking, meaning that multiple applications can be running at the same time. From a security perspective, we must make sure that these processes are isolated. That one application cannot interfere with another. There are two major methods that we can use to achieve process isolation.

Memory Segmentation

Memory segmentation means that each process, each application, is given its own memory space. And then a process is only allowed to access the data in its own memory space. The memory has been segmented.
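Here is a minimal Python sketch of that idea, assuming a simple base-and-limit scheme: each process gets a starting address (base) and a length (limit), and every access is checked against them. The class and the process names are made up for illustration. Real hardware does this check in the memory management unit.

```python
class SegmentedMemory:
    """Toy memory where each process can only touch its own segment."""

    def __init__(self, size):
        self._cells = [0] * size
        self._segments = {}   # process -> (base, limit)
        self._next_free = 0

    def allocate(self, process, length):
        if self._next_free + length > len(self._cells):
            raise MemoryError("not enough memory for a new segment")
        self._segments[process] = (self._next_free, length)
        self._next_free += length

    def _translate(self, process, offset):
        base, limit = self._segments[process]
        # The isolation check: offsets outside the segment are refused.
        if not 0 <= offset < limit:
            raise PermissionError(f"{process}: access outside its segment")
        return base + offset

    def write(self, process, offset, value):
        self._cells[self._translate(process, offset)] = value

    def read(self, process, offset):
        return self._cells[self._translate(process, offset)]
```

Because a process only ever supplies an offset into its own segment, it has no way to name an address that belongs to another process.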

Time Division Multiplexing

The second process isolation technique is known as time division multiplexing which is just a really fancy way of saying that we give each process access to a resource, like the CPU, or the network card, for a small slice of time, and then control is taken away from the first process and given to the second process. We have isolated the processes by only allowing them to access a resource one at a time.
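A simple way to picture those time slices is a round-robin scheduler. The Python sketch below is an assumption about how the slices might be handed out (real schedulers also use priorities and preemption), and the process names are placeholders.

```python
from collections import deque


def round_robin(processes, time_slice):
    """Share one resource between processes, one time slice at a time.

    processes: dict mapping process name -> units of work remaining.
    Returns the timeline as a list of (name, units_run) tuples.
    """
    queue = deque(processes.items())
    timeline = []
    while queue:
        name, remaining = queue.popleft()
        ran = min(time_slice, remaining)
        timeline.append((name, ran))
        if remaining - ran > 0:
            # Not finished: go to the back of the line and wait for a turn.
            queue.append((name, remaining - ran))
    return timeline


print(round_robin({"A": 3, "B": 5}, time_slice=2))
# [('A', 2), ('B', 2), ('A', 1), ('B', 2), ('B', 1)]
```

At any moment only one process holds the resource, which is exactly the isolation we are after.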

Processor States

We talked about CPUs, the brains of a computer system. CPUs provide a couple of different levels of access to their functionality.

Problem

The lower privilege level is known as problem state. It is in this lower privilege level that most applications will run. They don’t have full access to all the CPU’s capabilities, but enough for them to run. And by the way, why is it called problem state? Is the CPU having a rough day? No, problem state refers to what CPUs are meant to do: solve problems. So, problem state is just the normal operating privilege level for the CPU.

Supervisor

The higher privilege level on a CPU is known as supervisor state. The system kernel, the core of the operating system, will typically run in supervisor state, giving it full access to all the CPU’s capabilities.

Operating System Modes

Speaking of operating systems, there are two common privilege levels that applications, processes, and code can run at.

User Mode

The lower privilege level is known as user mode, and most applications will run in this lower privilege level. User mode restricts what system resources the application can access, both preventing direct access to hardware and limiting the percentage of resources that the application can consume.

Kernel Mode

The higher privilege level is known as kernel mode, and you can probably guess what runs in kernel mode: the system kernel. Kernel mode provides unrestricted access to the underlying hardware.
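Here is a toy Python sketch of the two privilege levels, whether you call them problem and supervisor state or user and kernel mode. The instruction names are invented, and the `system_call` method is a big simplification: a real kernel would validate the request before doing anything on the application’s behalf.

```python
# Hypothetical instructions that need the higher privilege level.
PRIVILEGED = {"write_disk_sector", "configure_network_card"}


class ToyCPU:
    def __init__(self):
        self.mode = "user"  # applications start at the lower privilege level

    def execute(self, instruction):
        if instruction in PRIVILEGED and self.mode != "kernel":
            raise PermissionError(f"{instruction} requires kernel mode")
        return f"{instruction} executed in {self.mode} mode"

    def system_call(self, instruction):
        # Switch to kernel mode for the request, then always drop back.
        self.mode = "kernel"
        try:
            return self.execute(instruction)
        finally:
            self.mode = "user"
```

An application calling `execute("write_disk_sector")` directly is refused. It has to ask the kernel, via `system_call`, to do the work for it.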

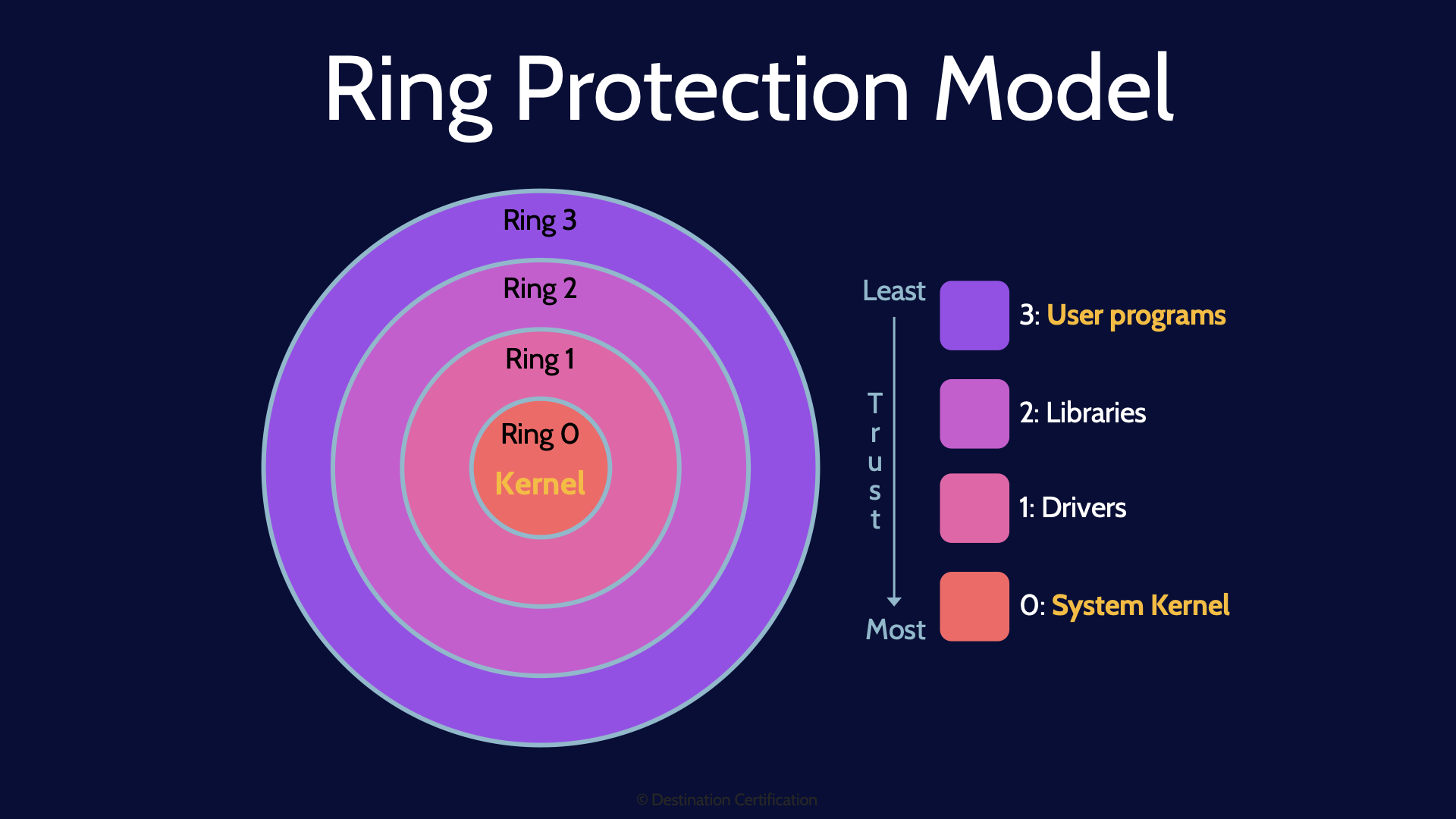

Ring Protection Model

Another way of thinking about how access to system resources is protected, and defining different levels of trust or privilege is the ring protection model.

The idea is that the centermost ring, ring 0, is where the greatest privilege is granted to system resources, and thus ring 0 requires the greatest protection and access should be limited to the greatest extent possible. Each successive ring, ring 1, ring 2, and ring 3, has less privileged access.

Ring 3: User Programs

Ring 3, the outermost ring, is where the least privilege is granted, and ring 3 is where most applications will run. Don’t worry about rings 2 & 1, as there is no consensus between different operating systems on exactly what these rings are used for, so you won’t get questions on them.

Ring 0: System Kernel

Ring 0, the innermost ring, is where the most privilege is granted. Do remember that Ring 0 is where the system kernel runs. Ring 0 is also where firmware resides.
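The ring model boils down to one comparison: lower ring number, more privilege. Here is a short Python sketch, with made-up resource names, that only models ring 0 and ring 3.

```python
from enum import IntEnum


class Ring(IntEnum):
    KERNEL = 0  # innermost ring, most privilege
    USER = 3    # outermost ring, least privilege


# Hypothetical resources and the outermost ring allowed to touch each one.
RESOURCE_MAX_RING = {
    "page_tables": Ring.KERNEL,
    "own_heap": Ring.USER,
}


def can_access(caller_ring, resource):
    """A caller may access a resource if its ring is at least as inner."""
    return caller_ring <= RESOURCE_MAX_RING[resource]
```

So `can_access(Ring.USER, "page_tables")` is `False`, while code in ring 0 can reach everything.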

Secure Memory Management

Applications need to store and retrieve data when they are running. They will store this data in memory. As we have already talked about, there needs to be process isolation controls in place, in the form of memory segmentation, to ensure that one application cannot access another application’s data. Secure Memory Management is the idea of implementing a security kernel that mediates applications' access to shared memory to ensure memory segmentation and prevent problems like buffer overflows and memory exhaustion.
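As a rough illustration, here is a Python sketch of a memory manager that guards against both problems: a per-process quota against memory exhaustion, and a bounds check against buffer overflows. The class, the quota idea, and the sizes are assumptions for this example, not a description of any real operating system.

```python
class MemoryManager:
    """Toy mediation of memory requests with quotas and bounds checks."""

    def __init__(self, per_process_quota):
        self.per_process_quota = per_process_quota
        self._used = {}  # process -> bytes already handed out

    def allocate(self, process, size):
        used = self._used.get(process, 0)
        # Guard against memory exhaustion: no process exceeds its quota.
        if used + size > self.per_process_quota:
            raise MemoryError(f"{process} would exceed its memory quota")
        self._used[process] = used + size
        return bytearray(size)

    @staticmethod
    def copy_into(buffer, offset, data):
        # Guard against buffer overflow: the write must fit in the buffer.
        if offset < 0 or offset + len(data) > len(buffer):
            raise ValueError("write would overflow the buffer")
        buffer[offset:offset + len(data)] = data
```

The explicit check in `copy_into` matters: without it, the write would spill past the end of the buffer instead of being refused.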

Data Hiding

Data hiding is the idea that if an application is running at a lower privilege level, then data at a higher privilege level will simply be hidden from the application. The application can’t try to access the higher security data if it doesn’t even know it’s there. What security model does this sound like the implementation of? The Bell-LaPadula Confidentiality model, which I covered in the first video of domain 3, and which I’ve linked to.
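Here is a tiny Python sketch of data hiding in the spirit of Bell-LaPadula’s “no read up” rule. The three classification levels and the catalog entries are invented for illustration.

```python
# Hypothetical classification levels, lowest to highest.
LEVELS = {"public": 0, "confidential": 1, "secret": 2}


def visible_objects(subject_clearance, catalog):
    """Return only the object names at or below the subject's clearance.

    catalog: dict mapping object name -> classification level.
    Higher-level objects are not denied; they are simply never listed.
    """
    return sorted(
        name
        for name, level in catalog.items()
        if LEVELS[level] <= LEVELS[subject_clearance]
    )


catalog = {"menu": "public", "salaries": "confidential", "launch_codes": "secret"}
print(visible_objects("confidential", catalog))  # ['menu', 'salaries']
```

A subject cleared for confidential never even sees that `launch_codes` exists.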

Defense in depth

And the final protection mechanism that we’ll cover here is the concept of defense in depth: implementing multiple layers of security controls, and having a complete control at each layer, meaning a combination of preventive, detective, and corrective controls, such that a single failure at one layer does not expose or compromise the security of the asset. We can use many of the protection mechanisms we have just discussed in combination to achieve defense in depth.

And that is an overview of the Trusted Computing Base within Domain 3, covering the most critical concepts you need to know for the exam.
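To picture the layering, here is a simplified Python sketch in which a request has to pass every layer in turn. The three layers, the port number, and the token value are all made up, and the sketch only models preventive controls. Detective and corrective controls would sit alongside each layer in a real design.

```python
def firewall(request):
    return request["port"] == 443


def authentication(request):
    return request.get("token") == "valid-token"


def input_validation(request):
    return "<script>" not in request.get("body", "")


# Hypothetical layers, checked from the outside in.
LAYERS = [firewall, authentication, input_validation]


def admit(request):
    """Return (True, None) if every layer passes, else (False, layer name)."""
    for layer in LAYERS:
        if not layer(request):
            return False, layer.__name__
    return True, None
```

Say an attacker has stolen a valid token, so the authentication layer fails to stop them. A request with a malicious body is still caught by `input_validation`, so that single failure does not expose the asset.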

I mentioned this in the first MindMap video in Domain 1, but it bears repeating: Passing the CISSP exam requires a lot more than just memorizing a bunch of facts. You also need to avoid thinking overly technically. You need to have the right mindset. You need to think like a CEO. Learn how to think like a CEO with this free training video: https://destcert.com/think-like-a-ceo/

Link in the description below as well.

If you found this video helpful you can hit the thumbs up button and if you want to be notified when we release additional videos in this MindMap series, then please subscribe and hit the bell icon to get notifications.

I will provide links to the other MindMap videos in the description below.

Thanks very much for watching! And all the best in your studies!